I like going down rabbit holes! That’s where I learn the most

Context

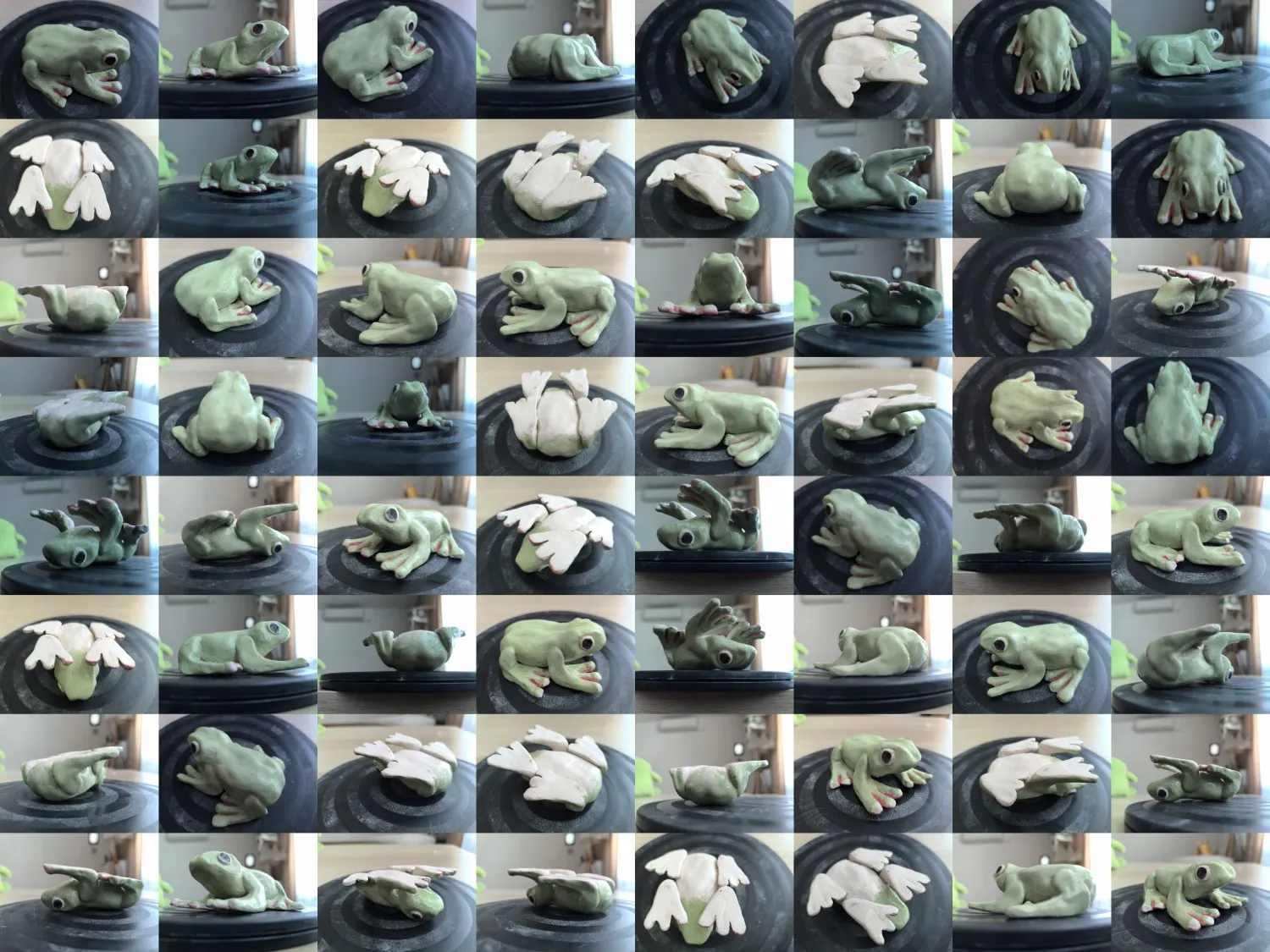

I have some toys & souvenirs I want to 3D scan - for example, this frog I actually made myself in a ceramics studio

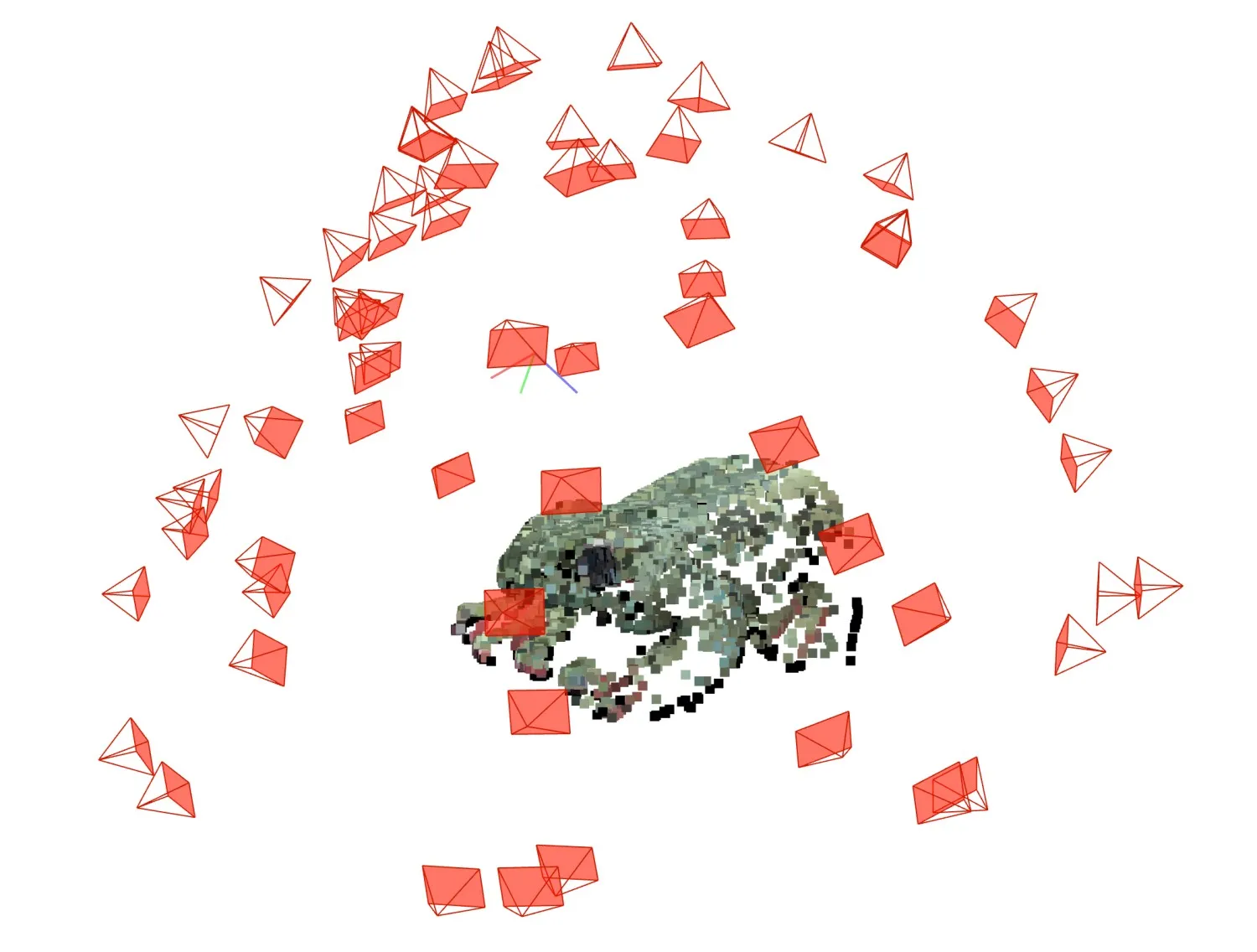

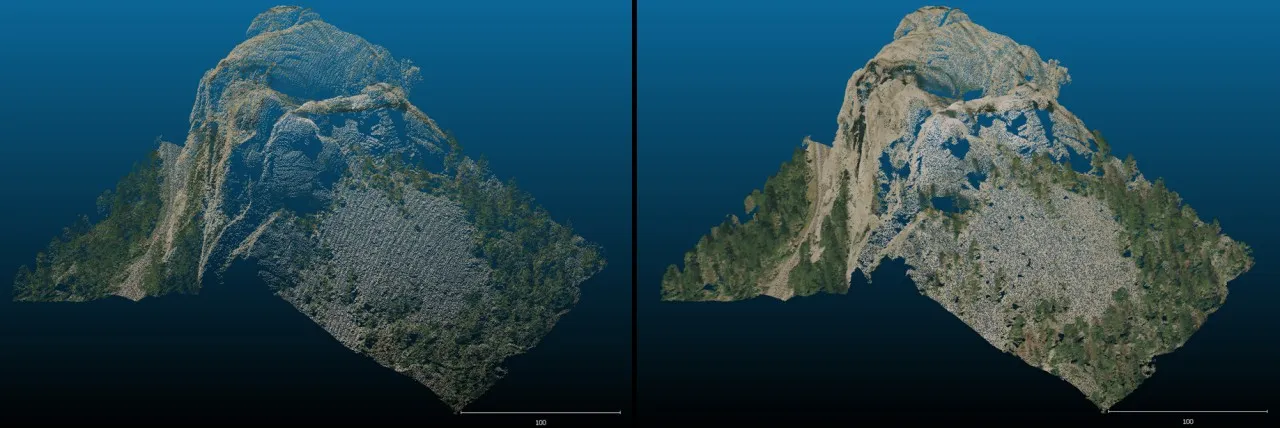

I’m using COLMAP to estimate the camera parameters for each photo & get a rough point cloud, but then I need an MVS (multi-view stereo) program to densify that point cloud

OpenMVS is a good one, but it strictly requires CUDA for point cloud densification - and I want to do everything locally on my Mac M3 (no CUDA 😔)

Turns out the execution models of Metal and CUDA differ so much that it’s not just “rewriting a CUDA kernel in Metal” - it would require reengineering the whole approach. Well, at least that’s what Claude told me

So I thought… do I really trust Claude? I decided I wanted to have a deeper understanding of how OpenMVS works (and then how programming for Apple Silicon looks) to decide for myself whether it’s really that hard of a task

Diving in

OpenMVS

Went ahead to the OpenMVS paper. First thing I realized: I need a solid grasp of PatchMatch, so that’s where I went

(Any other progress on reading OpenMVS is outside of the scope of this post lol)

PatchMatch

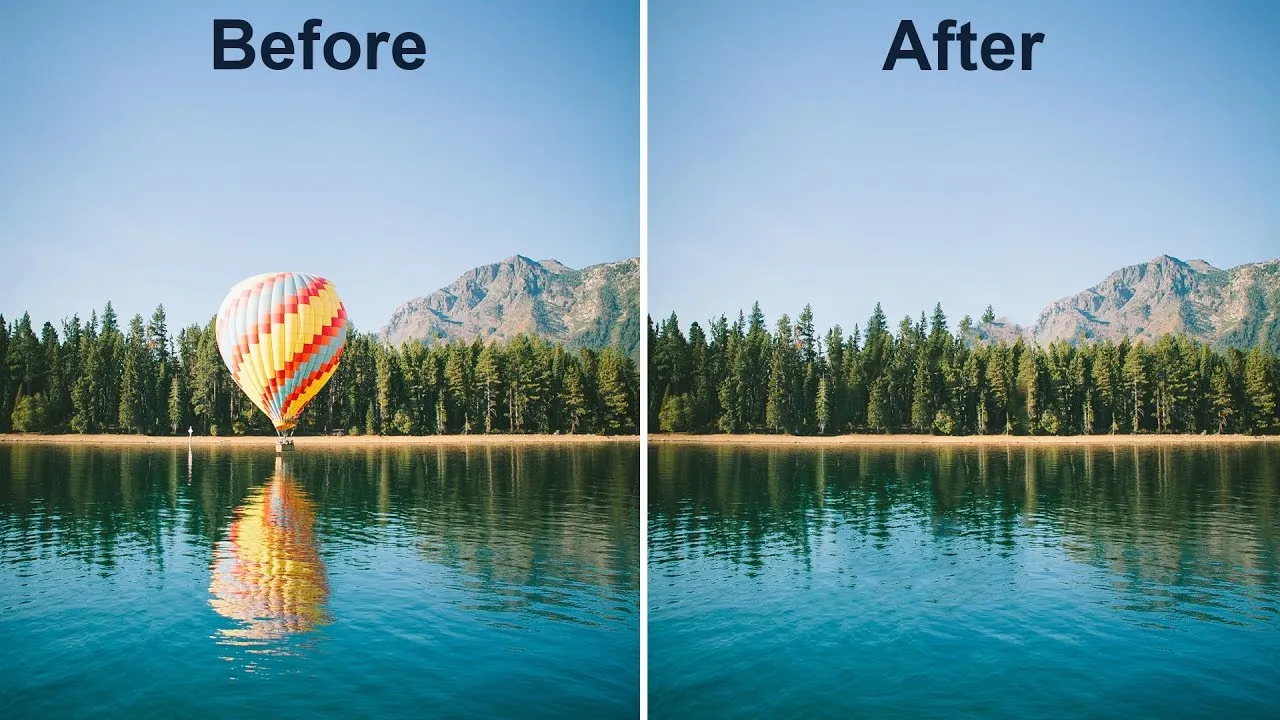

It wasn’t at all what I expected! I thought the original PatchMatch was also intended for MVS, but it turned out it was for image editing - specifically tools like Content-Aware Fill!

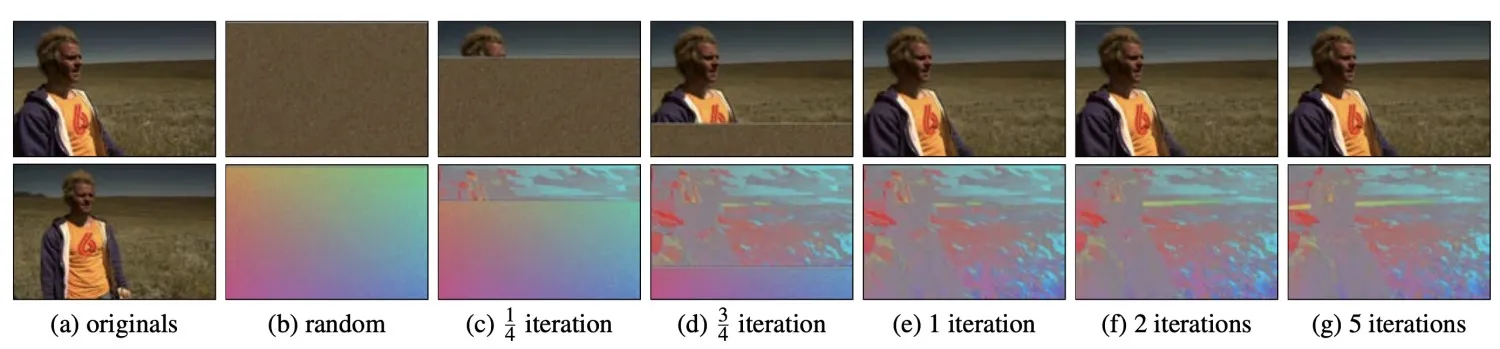

PatchMatch proposed a very efficient way to find matches between patches (haha) that wasn’t brute force. Though it was pretty simple - it assumed both patches were aligned and we could match them pixel-by-pixel with just a single offset value

The algorithm is interesting - I feel like we can draw a parallel to RANSAC - we’re replacing brute-force searching with some random sampling + a fit metric. On top of that, PatchMatch also has very efficient propagation: a good match found for one pixel gets passed on to its neighbors
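To make the random sampling + propagation idea concrete, here’s a minimal pure-Python sketch on tiny grayscale images (lists of lists). It’s a simplified illustration, not the paper’s implementation: the names `patch_dist` and `patchmatch`, the sum-of-squared-differences metric, the patch size and the halving search radius are all assumptions picked for readability.

```python
import random

def patch_dist(a, b, ax, ay, bx, by, p):
    """Sum of squared differences between two p x p patches (top-left corners given)."""
    total = 0
    for dy in range(p):
        for dx in range(p):
            d = a[ay + dy][ax + dx] - b[by + dy][bx + dx]
            total += d * d
    return total

def patchmatch(a, b, p=3, iters=4, seed=0):
    """Approximate nearest-neighbour field: nnf[y][x] = (bx, by, dist) for each patch of a."""
    rng = random.Random(seed)
    ah, aw = len(a) - p + 1, len(a[0]) - p + 1  # valid patch corners in a
    bh, bw = len(b) - p + 1, len(b[0]) - p + 1  # valid patch corners in b

    # 1. Random initialisation: every patch in a gets a random match in b.
    nnf = []
    for y in range(ah):
        row = []
        for x in range(aw):
            bx, by = rng.randrange(bw), rng.randrange(bh)
            row.append((bx, by, patch_dist(a, b, x, y, bx, by, p)))
        nnf.append(row)

    def try_candidate(x, y, bx, by):
        # Keep the candidate only if it is in bounds and strictly better.
        if 0 <= bx < bw and 0 <= by < bh:
            d = patch_dist(a, b, x, y, bx, by, p)
            if d < nnf[y][x][2]:
                nnf[y][x] = (bx, by, d)

    for it in range(iters):
        # Alternate scan direction so good matches can spread both ways.
        step = 1 if it % 2 == 0 else -1
        ys = range(ah) if step == 1 else range(ah - 1, -1, -1)
        xs = range(aw) if step == 1 else range(aw - 1, -1, -1)
        for y in ys:
            for x in xs:
                # 2. Propagation: reuse the already-visited neighbour's match,
                #    shifted by one pixel in the same direction.
                if 0 <= x - step < aw:
                    nbx, nby, _ = nnf[y][x - step]
                    try_candidate(x, y, nbx + step, nby)
                if 0 <= y - step < ah:
                    nbx, nby, _ = nnf[y - step][x]
                    try_candidate(x, y, nbx, nby + step)
                # 3. Random search around the current best, halving the radius.
                radius = max(bw, bh)
                while radius >= 1:
                    cbx, cby, _ = nnf[y][x]
                    try_candidate(x, y,
                                  cbx + rng.randint(-radius, radius),
                                  cby + rng.randint(-radius, radius))
                    radius //= 2
    return nnf
```

Calling `patchmatch(a, b)` returns, for every patch corner in `a`, the best match found so far in `b` and its distance. Each step only ever replaces a match with a strictly better one, so more iterations can’t make the field worse.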

But the efficient algorithm is the main thing that PatchMatch contributed. It wasn’t actually the paper that proposed using these matches for image editing - that was “Summarizing Visual Data Using Bidirectional Similarity”

Bidirectional similarity

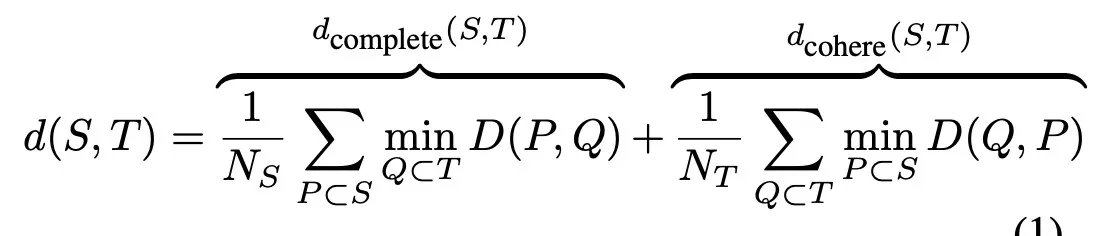

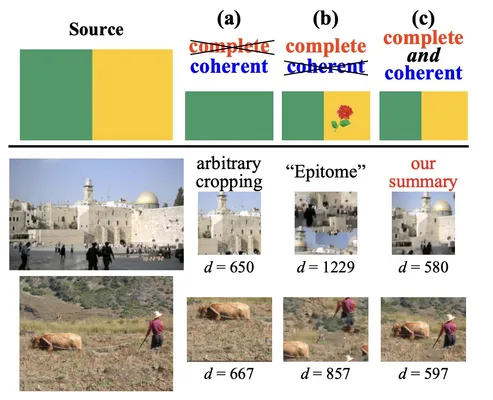

Core idea - it defined an “image similarity” function which evaluated whether the contents of two images are identical - by comparing patches at varying scales.

I.e. if an image has all the same objects but just in a different arrangement, the similarity is satisfied
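Here’s a rough sketch of what such a similarity function can look like. As I understand it, the measure has two halves: completeness (every patch of the source has a close match in the target) and coherence (every patch of the target has a close match in the source). The sketch below is a brute-force, single-scale simplification on tiny grayscale images; the function names, the squared-difference patch metric and the patch size `p` are assumptions for illustration, and the multi-scale part is left out.

```python
def patches(img, p):
    """All p x p patches of a grayscale image (list of lists), flattened to tuples."""
    h, w = len(img), len(img[0])
    return [tuple(img[y + dy][x + dx] for dy in range(p) for dx in range(p))
            for y in range(h - p + 1) for x in range(w - p + 1)]

def ssd(P, Q):
    """Sum of squared differences between two flattened patches."""
    return sum((u - v) ** 2 for u, v in zip(P, Q))

def directional(src, dst, p):
    """Average distance from each patch of src to its nearest patch in dst."""
    src_patches = patches(src, p)
    dst_patches = patches(dst, p)
    return sum(min(ssd(P, Q) for Q in dst_patches) for P in src_patches) / len(src_patches)

def bidirectional_distance(S, T, p=2):
    completeness = directional(S, T, p)  # is everything in S represented in T?
    coherence = directional(T, S, p)     # does everything in T come from S?
    return completeness + coherence      # 0 means "same content" at this patch size

S = [[0, 0, 9, 9],
     [0, 0, 9, 9]]
T = [[0, 0],
     [0, 0]]  # a crop of S: coherent, but not complete
print(bidirectional_distance(S, S), directional(T, S, 2), directional(S, T, 2))
```

A plain crop like `T` scores perfectly on coherence (everything in it exists in `S`) but badly on completeness (the bright half of `S` is missing), which is why the two terms are needed together. The inner `min` is a brute-force nearest-patch search, which is the slow part that PatchMatch speeds up.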

Then we can do stuff like:

- Image summarization - generate an image that is 3x smaller but contains all the same content

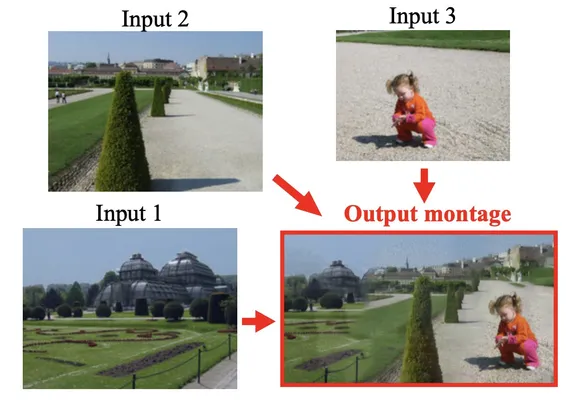

- Photo merging - we just concatenate images and then optimize for similarity to all input images - then the discontinuities become some kind of merging

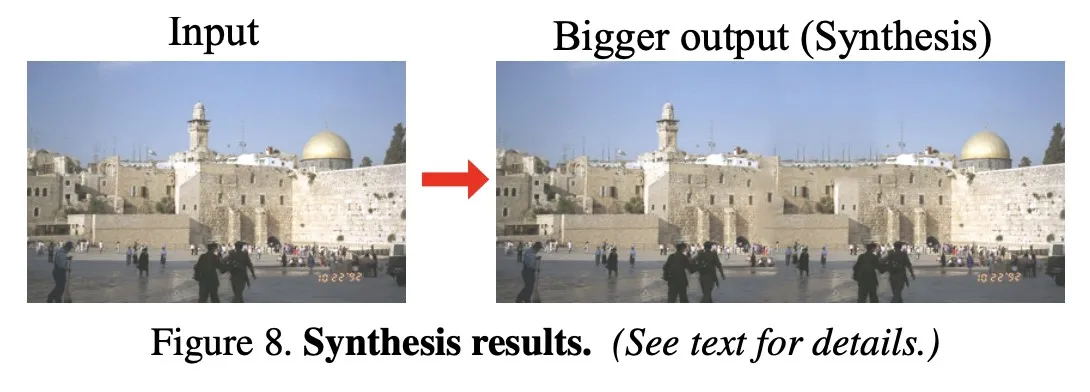

- Image synthesis - we stretch the image & fill in the remaining things optimizing for coherence

I was reeeeally surprised that such simple formulas lead to such good basic image processing tools. Ofc this is very slow, and also performs terribly on harder cases, but this is very interesting!!! Pre-AI image fill

And in its results the paper compares itself with Seam Carving, which turned out to be very interesting!

Seam Carving

I was amazed at the algorithm!!! It’s so stunningly simple yet so beautiful in how it works

The idea is so simple I didn’t even have to look at the paper after I saw the animation of how it works. And there’s this website that lets you try it out: https://www.aryan.app/seam-carving/
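For reference, here’s a minimal sketch of the core loop as I understand it: compute a per-pixel energy, find the connected top-to-bottom path (seam) with the lowest total energy using dynamic programming, remove it, repeat. The energy function here (absolute differences between neighboring pixels) and all the function names are assumptions for illustration; it works on a tiny grayscale image stored as a list of lists.

```python
def energy(img):
    """Simple gradient-magnitude energy: |right - left| + |down - up|, clamped at borders."""
    h, w = len(img), len(img[0])
    e = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            left, right = img[y][max(x - 1, 0)], img[y][min(x + 1, w - 1)]
            up, down = img[max(y - 1, 0)][x], img[min(y + 1, h - 1)][x]
            e[y][x] = abs(right - left) + abs(down - up)
    return e

def find_vertical_seam(e):
    """Return one column index per row, forming the lowest-energy connected seam."""
    h, w = len(e), len(e[0])
    cost = [row[:] for row in e]
    # Dynamic programming: cheapest way to reach each pixel from the top row.
    for y in range(1, h):
        for x in range(w):
            lo, hi = max(x - 1, 0), min(x + 1, w - 1)
            cost[y][x] += min(cost[y - 1][lo:hi + 1])
    # Backtrack from the cheapest pixel in the bottom row.
    x = min(range(w), key=lambda i: cost[h - 1][i])
    seam = [x]
    for y in range(h - 1, 0, -1):
        lo, hi = max(x - 1, 0), min(x + 1, w - 1)
        x = min(range(lo, hi + 1), key=lambda i: cost[y - 1][i])
        seam.append(x)
    seam.reverse()
    return seam

def remove_vertical_seam(img, seam):
    """Drop one pixel per row, shrinking the image width by 1."""
    return [row[:x] + row[x + 1:] for row, x in zip(img, seam)]

def carve(img, n):
    """Shrink the width by n pixels, recomputing the energy after every removal."""
    for _ in range(n):
        img = remove_vertical_seam(img, find_vertical_seam(energy(img)))
    return img
```

`carve(img, n)` returns an image with the same height and `n` fewer columns. Because a seam may shift by at most one column per row, it can snake around high-contrast objects instead of cutting straight through them.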